If you rely on biometrics for onboarding or authentication, liveness detection (also called presentation attack detection, PAD) is critical for stopping biometric spoofing, from printed photos and screen replays to 3D masks and deepfakes. Done right, liveness detection gives strong evidence that a live human is at the sensor before any recognition or matching occurs.

Quick Answer: How Liveness Detection Stops Spoofing

Liveness detection distinguishes live biometric signals from presentation attacks (PAs) using either active prompts (e.g., blink, head turn, random words) or passive analysis (e.g., texture, light response, depth cues, micro-movements). ISO/IEC 30107-3 specifies how PAD should be assessed and reported, enabling apples-to-apples vendor comparison.

Definitions and Core Concepts

Presentation attack (PA): Any attempt to subvert a biometric sensor with an artifact (photo, video, mask) or manipulated media (replay, deepfake).

Presentation Attack Detection (PAD): Mechanisms that detect PAs. ISO/IEC 30107-3 sets out testing and reporting methods for PAD, so buyers can compare solutions on the same terms.
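To make the reporting side concrete, here is a minimal Python sketch of the two error rates usually quoted from ISO/IEC 30107-3 evaluations: APCER (attack presentations wrongly classified as bona fide) and BPCER (bona fide presentations wrongly classified as attacks). The `Presentation` record and the function names are hypothetical, chosen for illustration. APCER is computed per attack type (the standard's "PAI species"), since pooling all attacks can hide one weak spot.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Presentation:
    # Hypothetical record of one test presentation.
    attack_species: Optional[str]  # e.g. "print", "replay"; None for a bona fide user
    classified_live: bool          # PAD decision: True if the system called it live


def apcer_by_species(samples):
    """Per attack type: share of attack presentations accepted as live."""
    totals = defaultdict(int)
    accepted = defaultdict(int)
    for s in samples:
        if s.attack_species is not None:
            totals[s.attack_species] += 1
            accepted[s.attack_species] += s.classified_live
    return {species: accepted[species] / totals[species] for species in totals}


def bpcer(samples):
    """Share of bona fide presentations rejected as attacks."""
    bona_fide = [s for s in samples if s.attack_species is None]
    if not bona_fide:
        raise ValueError("no bona fide presentations in the sample set")
    return sum(not s.classified_live for s in bona_fide) / len(bona_fide)
```

When comparing vendors, check that both rates were measured at the same decision threshold and against the same attack types. A low APCER means little if it was bought with a high BPCER.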

Biometric spoofing has evolved. Early PAs relied on 2D prints; newer attacks use high-resolution OLED replays, textured 3D masks, and AI-generated deepfakes. Modern PAD algorithms analyze multi-signal cues (e.g., skin micro-texture, photometric responses, depth/IR) to decide if a sample is live.

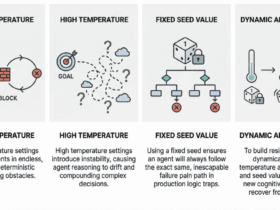

Active vs. Passive Liveness Detection

- Active liveness: The user responds to a prompt, such as blinking, smiling, turning left or right, or saying a phrase. Pros: simple mental model; strong against basic 2D attacks. Cons: adds friction; a fixed or predictable prompt sequence can be learned and replayed by an attacker.

- Passive liveness: No prompts. The model infers liveness from natural signals (texture, motion parallax, remote PPG, lens reflections). Pros: great UX; scalable to high-volume KYC. Cons: harder to build; must keep pace with new PAs and deepfakes.

In practice, many platforms combine both via risk-adaptive flows: start with passive checks, then escalate to active or multimodal checks when risk is high (e.g., velocity anomalies, Tor exit nodes, device emulation).
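A risk-adaptive flow of this kind can be sketched as a small decision function. Everything below is an illustrative assumption rather than a reference design: the `Session` fields, the `Step` names, and especially the thresholds (0.90 and 0.30), which would need tuning on your own traffic.

```python
from dataclasses import dataclass
from enum import Enum


class Step(Enum):
    ACCEPT = "accept"
    ACTIVE_CHALLENGE = "active_challenge"
    REJECT = "reject"


@dataclass(frozen=True)
class Session:
    # Hypothetical inputs; real systems will expose different signals.
    passive_score: float    # 0.0 (likely spoof) to 1.0 (likely live)
    velocity_anomaly: bool  # e.g. many attempts in a short window
    tor_exit_node: bool
    emulated_device: bool


def next_step(session, accept_at=0.90, reject_below=0.30):
    """Start passive; escalate to an active check when risk or doubt is high."""
    high_risk = (
        session.velocity_anomaly
        or session.tor_exit_node
        or session.emulated_device
    )
    if session.passive_score < reject_below:
        return Step.REJECT
    if high_risk or session.passive_score < accept_at:
        return Step.ACTIVE_CHALLENGE
    return Step.ACCEPT
```

The design choice here is that risk signals never reject on their own; they only add a step. That keeps friction low for most users while raising attacker cost on suspicious sessions.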

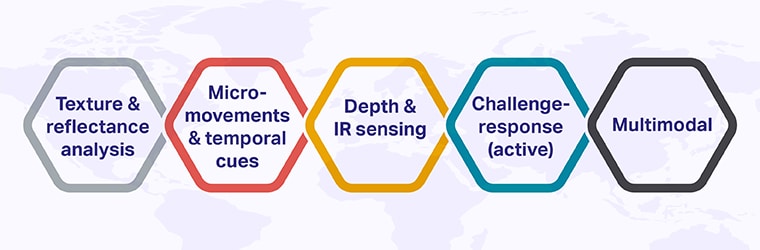

Detection Methods You’ll See in the Field

- Texture & reflectance analysis: Skin exhibits fine-grained micro-texture and photometric responses that differ from displays and printed media.

- Micro-movements & temporal cues: Involuntary eye blinks, subtle head sway, or blood-flow signals across frames are difficult to replay convincingly.

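- Depth & IR sensing: Structured-light or time-of-flight (ToF) sensors expose flat 2D spoofs, which lack the depth profile of a real face; infrared (IR) imaging highlights material differences between skin and artifacts.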

- Challenge-response (active): Randomized prompts increase attacker cost.

- Multimodal: Combining face, voice, and device signals can further reduce false accepts.

Vendors describe these techniques differently, but they map to PAD categories recognized in industry literature and buyer guides.
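As a rough illustration of the challenge-response method, the sketch below issues an unpredictable prompt sequence per session and checks the observed actions against it. The prompt names and the `new_challenge` and `matches` helpers are hypothetical. Detecting the actions from video is out of scope here and is assumed to happen upstream.

```python
import secrets

# Illustrative prompt set; a production system would likely use more variety.
PROMPTS = ("blink", "smile", "turn_left", "turn_right")


def new_challenge(length=3):
    """Draw `length` distinct prompts in random order, plus a session nonce."""
    if not 1 <= length <= len(PROMPTS):
        raise ValueError("length must be between 1 and the number of prompts")
    pool = list(PROMPTS)
    sequence = []
    for _ in range(length):
        # secrets uses a cryptographically strong random source.
        sequence.append(pool.pop(secrets.randbelow(len(pool))))
    return {"nonce": secrets.token_hex(16), "prompts": sequence}


def matches(challenge, observed_actions):
    """True only if the observed actions follow the issued order exactly."""
    return list(observed_actions) == challenge["prompts"]
```

Binding each challenge to a one-time nonce and a short expiry on the server side is what stops a recorded response from being replayed in a later session.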

What Are Some Types of Biometric Spoofing?

Different kinds of biometric spoofing target different authentication methods and exploit the specific weaknesses of each. As a result, presentation attacks are not limited to one modality and can target several biometric modalities.

Liveness Detection Use-Cases Across Industries

Across banking, crypto, telecom, and eGov, liveness detection helps stop spoofing in KYC, high-value transfers, SIM/eSIM flows, digital ID access, and remote exams, keeping fraud out while keeping user friction low.

Liveness Detection That Works: Partner with Shaip

Liveness detection is your first defense against biometric spoofing, from prints and replays to 3D masks and deepfakes. Pair passive-first, risk-adaptive flows with continuous monitoring, and validate performance on your own traffic.

How Shaip helps (proven, production-ready):

- Ready-to-license face anti-spoofing datasets covering 3D mask, makeup, and replay attacks, with optional labeling and QA for liveness/PAD model training. Examples include curated video sets, such as the 3D Mask & Makeup Attack collection and Real + Replay libraries, each sized in the thousands of clips.

- Case study: Delivery of 25,000 anti-spoofing videos from 12,500 participants (one real + one replay each), recorded at 720p+ / ≥26 FPS, with 5 ethnicity groups and structured metadata—built to improve fraud detection robustness.

- Ethically sourced facial image & video data to accelerate training and reduce bias for enterprise face recognition initiatives.

Let’s talk: If you need biometric data collection, Face Recognition Dataset sourcing, or AI data annotation to harden your PAD against emerging attacks, Shaip can scope a fit-for-risk dataset and evaluation plan aligned to your KPIs and compliance needs.