Downtime should be predictable by now. With advanced monitoring systems, cloud infrastructure, and AI-driven analytics, IT teams are better equipped than ever. Yet outages still happen without warning, and when they do, they disrupt operations, damage customer trust, and cost real money.

So what’s going wrong?

The truth is, downtime isn’t usually caused by a single failure. It’s the result of hidden gaps that build up across systems, tools, and processes. Let’s unpack why IT teams are still getting caught off guard.

The Illusion of Complete Visibility

Most organizations believe they have full visibility into their systems. Dashboards are running, alerts are configured, and logs are being captured in real time.

But visibility doesn’t always mean clarity.

Teams often rely on isolated metrics: CPU usage, memory load, latency spikes. These signals, while useful, don’t tell the full story. Without context, they become noise rather than insight.

Here’s where the gap appears:

- Metrics are monitored individually, not collectively

- Alerts trigger after impact begins, not before

- Root cause analysis becomes reactive rather than proactive

What this really means is simple: teams see data, but they don’t always understand it in time.
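To make the difference between individual and collective monitoring concrete, here is a minimal sketch that evaluates three signals together instead of one at a time. The `Sample` fields, the threshold values, and the status labels are all illustrative assumptions, not recommendations for any particular system.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    cpu_pct: float
    mem_pct: float
    p95_latency_ms: float


# Hypothetical thresholds; real values depend on the workload.
WARN = {"cpu_pct": 70.0, "mem_pct": 75.0, "p95_latency_ms": 300.0}
CRIT = {"cpu_pct": 90.0, "mem_pct": 90.0, "p95_latency_ms": 800.0}


def elevated_signals(sample):
    """Return the names of all signals at or above their warning level."""
    return [name for name, limit in WARN.items() if getattr(sample, name) >= limit]


def assess(sample):
    """Classify a sample by looking at the signals together."""
    # Any single signal past its critical limit is critical on its own.
    if any(getattr(sample, name) >= limit for name, limit in CRIT.items()):
        return "critical"
    elevated = elevated_signals(sample)
    # Several signals drifting at once is treated as an early warning,
    # even though none of them would page anyone individually.
    if len(elevated) >= 2:
        return "degrading"
    if len(elevated) == 1:
        return "watch"
    return "ok"
```

A sample such as `Sample(cpu_pct=75, mem_pct=80, p95_latency_ms=350)` crosses no critical limit, yet `assess` reports it as `"degrading"` because all three signals are elevated at the same time.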

Growing Complexity, Shrinking Control

Modern IT environments are no longer straightforward. Applications are distributed across microservices, containers, and third-party APIs. Each component introduces its own dependencies and risks.

With this complexity comes a critical challenge—control is no longer centralized.

Key realities of modern infrastructure:

- A single user request may pass through multiple services

- Failures in one component can cascade across the system

- Dependencies often lie outside direct control

As systems evolve, identifying the exact source of failure becomes harder. When something breaks, it feels sudden, but in reality it’s the result of interconnected weaknesses.
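Cascading failure is easier to reason about when the dependency map is written down. The sketch below walks a small call graph to find every service that could be affected when one component fails. The service names and the `CALLS` map are hypothetical, and the traversal assumes any caller of a failed service is potentially impacted.

```python
from collections import deque

# Hypothetical service map: each key calls the services in its list.
CALLS = {
    "web": ["checkout", "search"],
    "checkout": ["payments", "inventory"],
    "search": ["inventory"],
    "payments": [],
    "inventory": [],
}


def impacted_by(failed, calls):
    """Return every service that directly or indirectly calls `failed`."""
    # Invert the edges so we can walk from a dependency to its callers.
    callers = {}
    for service, deps in calls.items():
        for dep in deps:
            callers.setdefault(dep, set()).add(service)

    seen = set()
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    # The failed service itself is not part of its own blast radius.
    seen.discard(failed)
    return seen
```

In this map, a failure in `inventory` reaches `checkout`, `search`, and `web`, even though `web` never calls `inventory` directly.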

Tool Overload Creates Blind Spots

Ironically, having more tools can make teams less effective.

Organizations often deploy multiple platforms for monitoring, logging, performance tracking, and cloud management. While each tool serves a purpose, they rarely integrate seamlessly.

This leads to:

- Fragmented data across platforms

- Lack of a unified system view

- Slower incident diagnosis

Instead of speeding up resolution, teams spend valuable time switching between tools and piecing together information. By the time clarity emerges, downtime has already escalated.
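One small step toward a unified view is putting events from different tools on a single timeline. The sketch below merges already time-sorted event streams using the standard library's `heapq.merge`. The `(timestamp, tool, message)` tuple layout and the sample events are assumptions made for illustration.

```python
import heapq


def unified_timeline(*streams):
    """Merge time-sorted event streams into one chronological list.

    Each event is assumed to be a (timestamp, tool, message) tuple, and
    each input stream must already be sorted by timestamp.
    """
    return list(heapq.merge(*streams, key=lambda event: event[0]))


# Hypothetical events exported from three separate tools.
metrics = [(1, "metrics", "cpu high"), (5, "metrics", "latency high")]
logs = [(2, "logs", "upstream timeout"), (4, "logs", "retry storm")]
deploys = [(0, "deploys", "v2 rollout started")]

timeline = unified_timeline(metrics, logs, deploys)
```

Read in order, the merged timeline places the rollout just before the first symptoms, which is the kind of connection that is easy to miss when each tool is inspected separately.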

Alert Fatigue Is Undermining Response

Alerts are meant to help—but too many alerts do the opposite.

When engineers are bombarded with constant notifications, they begin to filter them mentally. Low-priority or false alerts dilute the importance of critical ones.

The consequences are serious:

- Important warnings get ignored

- Response times increase

- Early signals of failure go unnoticed

Over time, teams stop trusting alerts altogether. And when a real issue arises, it doesn’t get the attention it deserves until it’s too late.
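A common way to reduce alert noise is to drop low-severity alerts and suppress repeats of the same alert within a time window. The following is a minimal sketch of that idea; the `Alert` fields, severity names, and the five-minute default window are assumptions, not the behavior of any specific alerting product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    timestamp: float  # seconds since some reference point
    source: str
    check: str
    severity: str  # "info", "warning", or "critical"


SEVERITY_RANK = {"info": 0, "warning": 1, "critical": 2}


def filter_alerts(alerts, min_severity="warning", window_s=300.0):
    """Keep alerts at or above min_severity, suppressing recent repeats."""
    last_sent = {}
    kept = []
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        if SEVERITY_RANK[alert.severity] < SEVERITY_RANK[min_severity]:
            continue
        # Severity is part of the key so that an escalation from warning
        # to critical is never suppressed as a duplicate.
        key = (alert.source, alert.check, alert.severity)
        previous = last_sent.get(key)
        if previous is not None and alert.timestamp - previous < window_s:
            continue
        last_sent[key] = alert.timestamp
        kept.append(alert)
    return kept
```

The design choice worth noting is the deduplication key: grouping only by source and check would quiet the channel further, but it would also hide the moment a warning turns critical.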

Reactive Mindset Over Proactive Strategy

Many IT teams still operate in a reactive mode. Systems are monitored, but not actively challenged.

Failures often occur in unexpected scenarios—traffic surges, rare bugs, or third-party disruptions. Without proactive testing, these conditions remain unexamined until they occur in production.

Common gaps include:

- Limited use of load testing or chaos engineering

- Over-reliance on pre-deployment testing

- Lack of simulation for real-world stress conditions

What this shows is a deeper issue: stability is assumed, not continuously validated.
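Continuous validation can start small. The sketch below injects simulated failures into a function call and measures how often a simple retry policy still succeeds. It is a toy fault-injection experiment rather than a full chaos engineering setup, and `fetch_price`, `InjectedFault`, and the retry count are hypothetical names and values chosen for illustration.

```python
import random


class InjectedFault(Exception):
    """Raised in place of a real dependency failure."""


def with_faults(func, failure_rate, rng):
    """Wrap func so that it fails with the given probability."""
    def wrapper(*args, **kwargs):
        if rng.random() < failure_rate:
            raise InjectedFault("simulated dependency failure")
        return func(*args, **kwargs)
    return wrapper


def call_with_retry(func, attempts=3):
    """Call func up to `attempts` times (assumed >= 1), re-raising the last fault."""
    last_error = None
    for _ in range(attempts):
        try:
            return func()
        except InjectedFault as exc:
            last_error = exc
    raise last_error


def fetch_price():
    # Stand-in for a call to a real dependency.
    return 42


def run_experiment(failure_rate, trials, seed=0):
    """Return the fraction of trials that succeed despite injected faults."""
    rng = random.Random(seed)  # seeded so the experiment is repeatable
    flaky = with_faults(fetch_price, failure_rate, rng)
    successes = 0
    for _ in range(trials):
        try:
            call_with_retry(flaky)
            successes += 1
        except InjectedFault:
            pass
    return successes / trials
```

Running `run_experiment` at several failure rates gives a rough picture of how much instability the retry policy can absorb before users would notice.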

Dependency Risks Are Often Invisible

Today’s applications depend heavily on external services: payment gateways, APIs, cloud platforms. While these integrations enable scalability, they also introduce risk.

The problem is that these dependencies are often under-monitored.

Key challenges:

- Limited visibility into third-party performance

- No control over external failures

- Delayed detection of upstream issues

When a dependency fails, it impacts your system immediately. But because the issue originates elsewhere, it’s harder to detect and resolve quickly.
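The article does not prescribe a specific technique here, but one widely used pattern for surfacing upstream failures quickly is a circuit breaker: count consecutive failures of a dependency and stop calling it for a cool-down period once a threshold is reached. The sketch below is a simplified version; the class name, defaults, and injectable `clock` parameter are assumptions for illustration.

```python
import time


class CircuitOpenError(Exception):
    """Raised when calls are blocked because the dependency looks unhealthy."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock  # injectable so the breaker can be tested without sleeping
        self.failures = 0
        self.opened_at = None

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("dependency marked unhealthy")
            # Cool-down has elapsed: let one trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Any success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Beyond protecting the caller, the moment the breaker opens is itself a useful signal: it can be logged or alerted on as direct evidence that the problem originates upstream.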

Weak Incident Response Frameworks

Even when issues are detected early, response execution can fall short.

In high-pressure situations, uncertainty becomes the biggest obstacle. Teams may not have clearly defined roles, escalation paths, or recovery strategies.

This results in:

- Confusion during critical moments

- Delayed decision-making

- Prolonged downtime

Incident response isn’t just about tools—it’s about preparation. Without regular drills and clear protocols, even skilled teams struggle under pressure.

Over-Reliance on Automation

Automation has transformed IT operations, but it’s not a safety net for everything.

Auto-scaling, failover mechanisms, and self-healing systems reduce manual effort—but they can also mask underlying issues.

When automation fails:

- Problems escalate faster than expected

- Root causes become harder to identify

- Systems behave unpredictably

In some cases, automation can even worsen outages by reacting incorrectly to abnormal conditions.
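One guardrail against automation that makes things worse is a budget on how often it may act. The sketch below caps automated restarts within a sliding time window, so a restart loop stops and hands over to a human instead of hiding the underlying fault. The class name and limits are hypothetical.

```python
from collections import deque


class RestartBudget:
    """Allow at most max_restarts automated restarts per sliding window."""

    def __init__(self, max_restarts=3, window_s=600.0):
        self.max_restarts = max_restarts
        self.window_s = window_s
        self._restarts = deque()  # timestamps of restarts that were allowed

    def allow(self, now):
        """Return True if a restart at time `now` (seconds) fits the budget."""
        # Forget restarts that have aged out of the window.
        while self._restarts and now - self._restarts[0] >= self.window_s:
            self._restarts.popleft()
        if len(self._restarts) >= self.max_restarts:
            return False  # budget exhausted: escalate to a human instead
        self._restarts.append(now)
        return True
```

When `allow` returns `False`, the automation should stop acting and raise an incident, which keeps repeated self-healing from masking a root cause.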

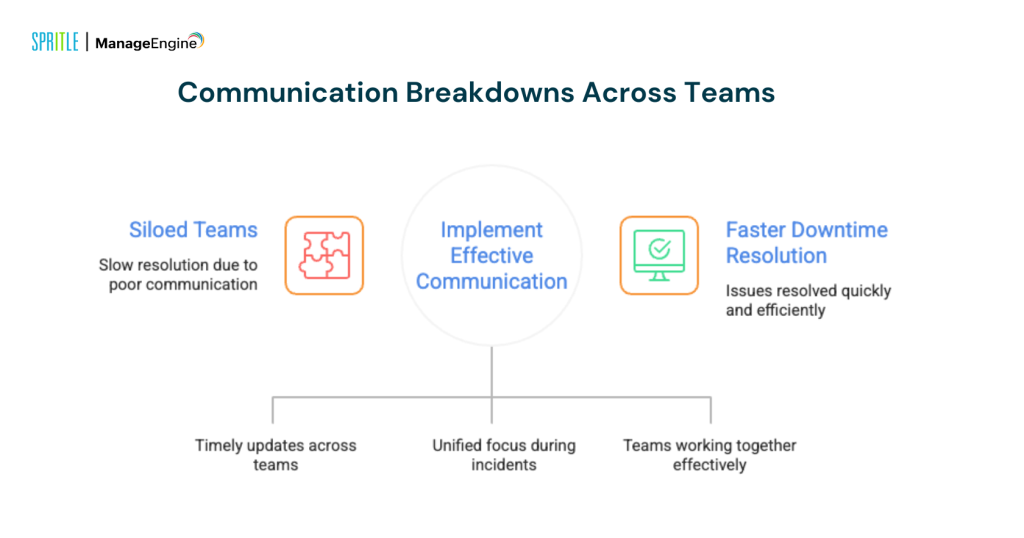

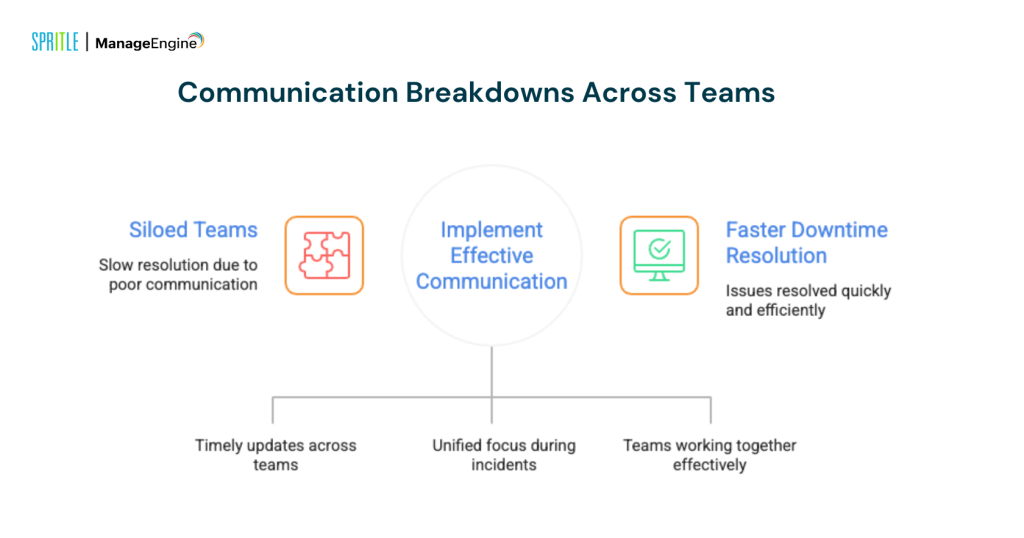

Communication Breakdowns Across Teams

Downtime is rarely confined to a single team. It often spans development, operations, infrastructure, and support.

When communication isn’t seamless, resolution slows down.

Common communication challenges:

- Siloed teams working independently

- Delayed information sharing

- Misaligned priorities during incidents

Effective communication is just as critical as technical expertise. Without it, even small issues can turn into major outages.

Postmortems Without Action

After every outage, there’s usually a review. Root causes are identified, and lessons are documented.

But documentation alone doesn’t prevent future failures.

The real issue lies here:

- Action items are not implemented

- Process improvements are delayed

- The same risks remain in the system

As a result, similar outages happen again—unexpected, yet avoidable.

What Needs to Change?

Preventing surprise downtime isn’t about adding more tools—it’s about changing how systems are managed.

High-impact improvements include:

- Shifting from reactive alerts to predictive intelligence

- Unifying monitoring for a complete system view

- Continuously testing failure scenarios

- Actively monitoring external dependencies

- Strengthening incident response through regular drills

- Turning postmortem insights into real action
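As a small illustration of the first item, a predictive check can be as simple as fitting a straight line to recent readings and estimating when a limit will be crossed. The sketch below uses an ordinary least-squares fit on hypothetical hourly disk-usage readings; real systems may need seasonality handling or more robust models.

```python
def linear_fit(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    if var_x == 0:
        raise ValueError("need at least two distinct x values")
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def hours_until(threshold, hours, values):
    """Estimate hours from the last reading until the trend reaches threshold.

    Returns None when the trend is flat or falling.
    """
    slope, intercept = linear_fit(hours, values)
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - hours[-1])


# Hypothetical disk usage (%) sampled once per hour.
hours = [0, 1, 2, 3]
usage = [60.0, 62.0, 64.0, 66.0]
remaining = hours_until(90.0, hours, usage)
```

With these sample readings the usage grows two points per hour, so the estimate gives the team about twelve hours of warning before the 90% limit, instead of an alert at the moment it is hit.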

Final Perspective

Downtime is rarely a sudden event. It’s a slow buildup of unnoticed signals, disconnected systems, and unaddressed risks.

What appears as a surprise is often the result of missed connections.

IT teams that move beyond surface-level monitoring and focus on deeper system understanding will be the ones that stay ahead. Because in reality, the goal isn’t just to respond to downtime—it’s to see it coming before it happens.